Article: Thermodynamics of prediction

Susanne Still, David A. Sivak, Anthony J. Bell, Gavin E. Crooks, Phys. Rev. Lett., 109, 120604 (2012)

[ Full Text | Journal | Press | arXiv ]

Hat Trick! And Editors’ Suggestion. Also a Nature News item by Philip Ball.

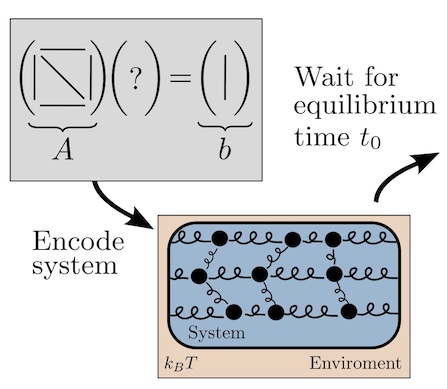

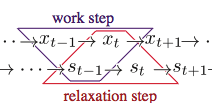

This paper is a melding of ideas about machine learning from Susanne Still and Tony Bell, with ideas from David and me about nonequilibrium thermodynamics. For a molecular-scale machine with information processing capabilities, there’s a tradeoff between thermodynamic efficiency, memory and prediction. A prodigious memory allows more accurate prediction of the future, which can be exploited to reduce dissipation. But the persistence of memory is a liability, since information erasure leads to increased dissipation. A thermodynamically optimal machine must balance memory against prediction by minimizing its nostalgia, the useless information about the past.
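In symbols, the tradeoff can be sketched as follows. The notation here is an assumption chosen for illustration and does not appear in the text above: $s_t$ is the system state, $x_t$ the environmental signal at time step $t$, $I[\cdot\,;\cdot]$ is mutual information, and $\beta = 1/k_B T$.

$$
I_{\mathrm{mem}}(t) = I[s_t; x_t], \qquad I_{\mathrm{pred}}(t) = I[s_t; x_{t+1}]
$$

$$
\beta \,\langle W_{\mathrm{diss}}[x_t \to x_{t+1}] \rangle = I_{\mathrm{mem}}(t) - I_{\mathrm{pred}}(t)
$$

The right-hand side is the nostalgia: the memory the system holds about the current signal that says nothing about the next one. Read this way, the average work dissipated as the signal changes from $x_t$ to $x_{t+1}$, measured in units of $k_B T$, is exactly that nonpredictive information.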

Abstract: A system responding to a stochastic driving signal can be interpreted as computing, by means of its dynamics, an (implicit) model of the environmental variables. The system’s state retains information about past environmental fluctuations, and a fraction of this information is predictive of future ones. The remaining nonpredictive information reflects model complexity that does not improve predictive power, and represents the ineffectiveness of the model. We expose the fundamental equivalence between this model inefficiency and thermodynamic inefficiency, measured by the energy dissipated during the interaction between system and environment. Our results hold arbitrarily far from thermodynamic equilibrium and are applicable to a wide range of systems, including biomolecular machines. They highlight a profound connection between the effective use of information and efficient thermodynamic operation: any system constructed to keep memory about its environment and to operate energetically efficiently has to be predictive.
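To make "nonpredictive information" concrete, here is a minimal sketch of the information-theoretic side only. It assumes a hypothetical toy model that is not from the paper: a binary environmental signal that flips with probability `flip` at each step, and a system state that is a copy of the current signal corrupted with probability `noise`. The names `mutual_information` and `toy_nostalgia` are made up for this illustration, and nothing thermodynamic is simulated.

```python
import math


def mutual_information(joint):
    """Mutual information (in nats) of a joint distribution given as a 2D list."""
    p_row = [sum(row) for row in joint]
    p_col = [sum(col) for col in zip(*joint)]
    mi = 0.0
    for i, row in enumerate(joint):
        for j, p in enumerate(row):
            if p > 0:
                mi += p * math.log(p / (p_row[i] * p_col[j]))
    return mi


def toy_nostalgia(flip=0.2, noise=0.1):
    """Return (memory, predictive information, nostalgia) for the toy model.

    Assumed model (illustrative only): the signal x is a symmetric two-state
    Markov chain at stationarity, and the system state s is a noisy copy of
    the current signal value.
    """
    p_x = [0.5, 0.5]                                     # stationary signal distribution
    p_s_given_x = [[1 - noise, noise], [noise, 1 - noise]]   # indexed [x][s]
    p_next_given_x = [[1 - flip, flip], [flip, 1 - flip]]    # indexed [x][x_next]

    # Joint distribution of system state and current signal, indexed [s][x].
    joint_s_x = [[p_x[x] * p_s_given_x[x][s] for x in range(2)] for s in range(2)]

    # Joint distribution of system state and next signal, indexed [s][x_next],
    # obtained by summing over the current signal value.
    joint_s_next = [
        [
            sum(p_x[x] * p_s_given_x[x][s] * p_next_given_x[x][xn] for x in range(2))
            for xn in range(2)
        ]
        for s in range(2)
    ]

    memory = mutual_information(joint_s_x)
    predictive = mutual_information(joint_s_next)
    return memory, predictive, memory - predictive


if __name__ == "__main__":
    for flip in (0.0, 0.2, 0.5):
        memory, predictive, nostalgia = toy_nostalgia(flip=flip)
        print(f"flip={flip}: memory={memory:.4f} predictive={predictive:.4f} nostalgia={nostalgia:.4f}")
```

In this toy model the nostalgia vanishes when the signal never changes (`flip=0.0`), since everything remembered stays relevant, and it equals the full memory when the signal is unpredictable (`flip=0.5`). Under the paper's assumptions, that nonpredictive part is the quantity identified with dissipation.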